OpenAI API Integration Services

Pharos Production delivers expert OpenAI API integration for enterprises building intelligent products. Our team works with GPT-4, GPT-4o, o3, the Assistants API, function calling, embeddings and fine-tuning to build production-grade AI systems with proper guardrails and cost controls.

We help companies move beyond prototypes to robust, scalable OpenAI deployments. This includes prompt engineering, token optimization, structured output parsing, content moderation layers, fallback strategies and usage monitoring. Whether you need a customer-facing chatbot, internal knowledge assistant, document processing pipeline or AI-powered workflow automation, we architect solutions that balance capability with cost.

Pharos Production brings deep experience in enterprise AI deployment: rate limiting, PII filtering, audit logging, SOC 2 compliance and multi-region failover. We build the infrastructure around OpenAI APIs that turns experimental AI into reliable business tools.

- 20+ OpenAI integrations

- 12+ AI engineers

- 8+ models in production

- 25+ AI projects delivered

- 90+ engineers

- 90+ Clutch reviews

Enterprise-grade AI with responsible governance, data privacy and production-ready deployment

What is OpenAI API integration?

What we build with OpenAI API Integration

Customer support chatbots

GPT-4o powered support agents with RAG retrieval, function calling for order lookups and ticket creation, escalation to humans and multi-language support.

Document analysis and extraction

Automated contract review, invoice processing, compliance checking and structured data extraction from PDFs, images and scanned documents using GPT-4 Vision.

Code generation and review

AI-assisted development tools - code generation, refactoring suggestions, PR review automation, documentation generation and test case creation.

Content generation pipelines

Automated SEO content, product descriptions, email copy and marketing materials with brand voice fine-tuning, fact-checking and human approval workflows.

Semantic search and embeddings

text-embedding-3 powered search across knowledge bases, product catalogs and internal documentation with hybrid retrieval and reranking.

Workflow automation agents

Assistants API agents that manage CRM updates, schedule meetings, process expense reports and orchestrate multi-step business workflows via function calling.
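As a rough illustration of the function-calling pattern these agents rely on, the sketch below routes a tool call (a function name plus a JSON string of arguments, which is how the API hands tool calls to your code) to a local Python function. The functions `lookup_order` and `create_ticket`, the `TOOLS` registry and `dispatch_tool_call` are hypothetical names chosen for this example; real handlers would call your CRM or ticketing system.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical business functions; real ones would call a CRM or ticketing system.
def lookup_order(order_id: str) -> Dict[str, Any]:
    return {"order_id": order_id, "status": "shipped"}

def create_ticket(subject: str, priority: str = "normal") -> Dict[str, Any]:
    return {"ticket": subject, "priority": priority}

# Registry of the tools the model is allowed to call (an allow-list).
TOOLS: Dict[str, Callable[..., Dict[str, Any]]] = {
    "lookup_order": lookup_order,
    "create_ticket": create_ticket,
}

def dispatch_tool_call(name: str, arguments_json: str) -> str:
    """Run the requested tool and return its result as a JSON string."""
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        args = json.loads(arguments_json)
        result = TOOLS[name](**args)
    except (json.JSONDecodeError, TypeError) as exc:
        # Malformed JSON or unexpected arguments: report back instead of crashing.
        return json.dumps({"error": str(exc)})
    return json.dumps(result)
```

Returning errors as JSON rather than raising lets the calling loop pass the failure back to the model, which can then retry with corrected arguments or escalate to a human.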

OpenAI vs Anthropic vs open-source LLMs

| Factor | OpenAI | Anthropic / Open-source |
|---|---|---|
| Model range | GPT-4o, o3, o4-mini, GPT-4 Vision, DALL-E, Whisper | Anthropic: Claude 3.5/4. Open-source: Llama, Mistral |
| Reasoning | o3 for complex reasoning, math, code | Anthropic: extended thinking. Open-source: limited |
| Multimodal | Vision, audio (Whisper), image gen (DALL-E) | Anthropic: vision only. Open-source: varies |
| Function calling | Native JSON mode, parallel tool calls | Anthropic: tool use. Open-source: limited |
| Fine-tuning | GPT-4o fine-tuning with custom datasets | Anthropic: none. Open-source: full control (LoRA) |
| Enterprise | Azure OpenAI, SOC 2, data privacy API | Anthropic: AWS Bedrock. Open-source: self-hosted |
| Pricing | Pay-per-token, batch API for 50% discount | Anthropic: similar. Open-source: infra cost only |

Pharos Production recommends OpenAI for projects needing the broadest model range, multimodal capabilities and fine-tuning. Anthropic Claude excels in safety-critical and long-context tasks. Open-source models suit data-sensitive workloads requiring full control.

Limitations: OpenAI APIs have rate limits that require queuing and retry logic for high-throughput applications. Token costs scale linearly with usage - batch processing and caching strategies are essential. Fine-tuning requires high-quality training data (minimum 50-100 examples). Latency for o3 reasoning models can reach 30-60 seconds per request. Data sent to OpenAI APIs is processed externally - for regulated industries, consider Azure OpenAI with private endpoints.
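The retry logic mentioned above can be sketched as exponential backoff with jitter. This is a minimal illustration, not a production client: `RateLimitError` is a hypothetical stand-in for whatever exception your API client raises on a 429 response, and the default attempt count and delays are assumptions to tune per workload.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")

class RateLimitError(Exception):
    """Hypothetical stand-in for an HTTP 429 error from the API client."""

def call_with_backoff(
    request: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call request(), retrying on rate limits with exponentially growing waits."""
    for attempt in range(max_attempts):
        try:
            return request()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # Upper bound doubles each attempt (1s, 2s, 4s, ...), capped at max_delay.
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter: a random wait below the ceiling spreads out retries.
            sleep(random.uniform(0, ceiling))
    raise ValueError("max_attempts must be at least 1")
```

The `sleep` parameter is injectable so the behavior can be tested without waiting; in a queued system the same function would wrap each dequeued request.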

OpenAI Integration Benchmark 2026

Proprietary research based on 20+ OpenAI integration projects delivered by Pharos Production. Dataset covers chatbots, document processing, RAG systems and AI agents. Methodology (Pharos Verified Delivery): aggregated delivery metrics with token usage analytics and response quality benchmarks. Full report available on request.

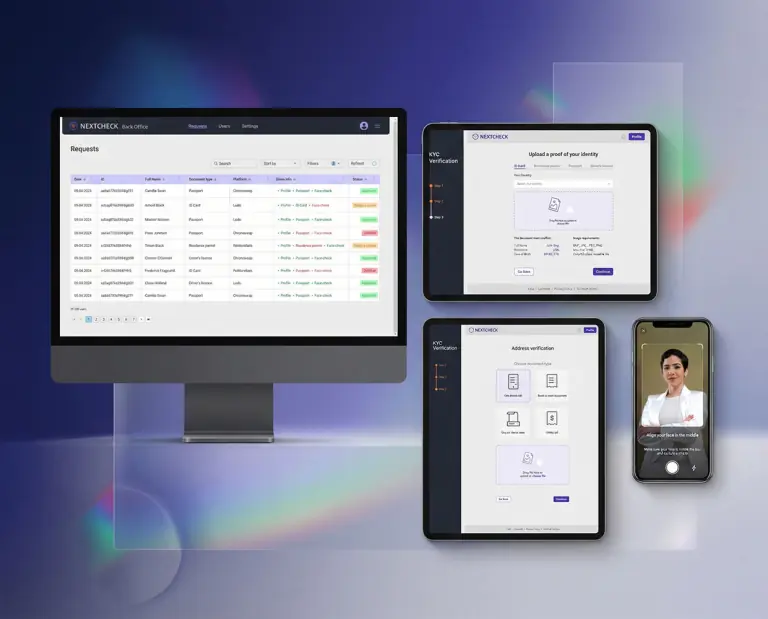

OpenAI API Integration projects we delivered

- OpenAI API pricing scales unpredictably with usage - a GPT-4o application processing 10,000 requests per day can cost $3,000-$8,000 monthly in API fees alone, and cost spikes from prompt injection or retry loops can blow budgets overnight.

- OpenAI enforces strict rate limits (TPM and RPM) that throttle production applications during peak traffic - hitting rate limits returns 429 errors that degrade user experience, and higher tier limits require spending commitments.

- Model deprecation cycles force mandatory migrations every 12-18 months - OpenAI retires older model versions with 6 months' notice, requiring prompt re-tuning, regression testing and output validation against the replacement model.

- All data passes through OpenAI servers with no self-hosting option - enterprises in regulated industries (healthcare, finance, government) face compliance risks, and OpenAI data processing terms may conflict with GDPR, HIPAA or internal data governance policies.

- OpenAI GPT-4o processes text, images and audio in a single API call, enabling multimodal enterprise applications.

- Function calling and JSON mode enable reliable structured output for integration with business systems and databases.

- The Assistants API provides managed state, file retrieval and code execution - reducing custom infrastructure by 60-70%.

- Pharos Production has delivered 20+ OpenAI-powered projects including chatbots, document processing and AI agents.

- An OpenAI integration MVP starts from $25,000-$50,000 and takes 6-10 weeks depending on RAG complexity and integrations.
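To make the cost bullet in the list above concrete (10,000 requests per day costing $3,000-$8,000 per month), here is a back-of-the-envelope estimator. The token counts and per-million-token prices in the usage note are placeholder assumptions for illustration only; check the current OpenAI price list before budgeting.

```python
def monthly_cost_usd(
    requests_per_day: int,
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
    days: int = 30,
) -> float:
    """Estimate monthly API spend from average tokens per request."""
    # Cost of one request, expressed in "price x tokens" units (not yet divided).
    per_request_units = (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    )
    total_units = requests_per_day * days * per_request_units
    # Prices are quoted per one million tokens.
    return total_units / 1_000_000
```

For example, `monthly_cost_usd(10_000, 3_000, 500, 2.50, 10.00)` comes to roughly $3,750 per month under those assumed prices, which sits inside the range quoted above. Doubling the average prompt length or adding retries moves the figure quickly, which is why per-request token counts are worth monitoring.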

Reviews

Independent reviews from Clutch, GoodFirms and Google - verified client feedback on our software projects

Based on 8 verified client reviews

Frequently asked questions


-

We use prompt compression, semantic caching (Redis + embeddings), batch API for non-real-time workloads (50% cost savings), model routing (GPT-4o-mini for simple tasks, GPT-4o for complex ones) and token budget monitoring with alerts.
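A minimal sketch of two of these techniques, model routing and token budget monitoring, might look like the following. The model names come from the answer above; the 4-characters-per-token heuristic, the 2,000-token threshold, the `needs_reasoning` flag and the 80% alert ratio are all assumptions chosen for illustration, and a real system would use a proper tokenizer and task classifier.

```python
from dataclasses import dataclass

CHEAP_MODEL = "gpt-4o-mini"
STRONG_MODEL = "gpt-4o"

def estimate_tokens(text: str) -> int:
    # Rough heuristic (about 4 characters per token for English); not a real tokenizer.
    return max(1, len(text) // 4)

def choose_model(prompt: str, needs_reasoning: bool = False,
                 long_prompt_tokens: int = 2000) -> str:
    """Send long or reasoning-heavy prompts to the stronger model, the rest to the cheap one."""
    if needs_reasoning or estimate_tokens(prompt) > long_prompt_tokens:
        return STRONG_MODEL
    return CHEAP_MODEL

@dataclass
class TokenBudget:
    daily_limit: int
    alert_ratio: float = 0.8  # warn once 80% of the budget is used (assumed threshold)
    used: int = 0

    def record(self, tokens: int) -> str:
        """Add usage and return 'ok', 'alert' or 'exceeded'."""
        self.used += tokens
        if self.used >= self.daily_limit:
            return "exceeded"
        if self.used >= self.daily_limit * self.alert_ratio:
            return "alert"
        return "ok"
```

In practice the status returned by `record` would feed an alerting channel, and an `"exceeded"` result would trigger throttling or a switch to the cheaper model.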

-

Yes with proper architecture. We use Azure OpenAI for data residency requirements, implement PII filtering before API calls, add audit logging for compliance and configure data retention policies.

OpenAI API data is not used for training by default.
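The PII filtering step can be pictured as a redaction pass that runs before any text leaves your infrastructure. The sketch below uses simple regular expressions for emails, card-like numbers and phone numbers; the patterns and the `redact_pii` name are illustrative assumptions, and pattern matching alone will miss many kinds of PII (names, addresses, free-text identifiers), so treat it as a starting point rather than a compliance control.

```python
import re
from typing import Dict, Tuple

# Illustrative patterns only; real deployments need broader, locale-aware detection.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD = re.compile(r"\b(?:\d[ -]?){13,16}\b")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact_pii(text: str) -> Tuple[str, Dict[str, int]]:
    """Replace matches with placeholders and count redactions per type."""
    counts: Dict[str, int] = {}
    # Cards are handled before phones so long digit runs are not mislabeled.
    for label, pattern in (("EMAIL", EMAIL), ("CARD", CARD), ("PHONE", PHONE)):
        text, found = pattern.subn(f"[{label}]", text)
        counts[label] = found
    return text, counts
```

The per-type counts are useful for the audit log: they record that redaction happened without storing the sensitive values themselves.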

-

Yes. We fine-tune GPT-4o with your domain data to improve accuracy, reduce prompt length and lower per-request costs.

Fine-tuning works best with 100+ high-quality examples and measurable evaluation criteria.

-

We implement exponential backoff, request queuing with priority lanes, token bucket rate limiting, fallback to secondary models (Claude, open-source) and usage dashboards with budget alerts.
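Token bucket rate limiting, one of the techniques listed above, can be sketched in a few lines. The bucket holds up to `capacity` tokens and refills at a steady rate; each request spends tokens and is refused when the bucket is empty. The class and parameter names are assumptions for this example, and a multi-process deployment would keep the bucket state in shared storage rather than in memory.

```python
import time
from typing import Callable

class TokenBucket:
    def __init__(self, capacity: float, refill_per_second: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock
        self.tokens = capacity      # start with a full bucket
        self.updated = clock()

    def try_acquire(self, cost: float = 1.0) -> bool:
        """Spend `cost` tokens if available; return False when the caller should wait or queue."""
        now = self.clock()
        elapsed = now - self.updated
        # Refill for the time that has passed, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

Setting `cost` to a request's estimated token count lets the same bucket approximate a tokens-per-minute limit, while a cost of 1 per call approximates a requests-per-minute limit. Requests that are refused can go to a queue or to a fallback model.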

-

Chatbot MVPs start from $25,000-$50,000. Document processing systems range from $40,000 to $100,000.

Enterprise AI platforms with multi-agent orchestration cost $80,000 to $200,000+.

Choose your cooperation model

Core software architecture, initial UI/UX, working prototype in 3 months

Software architecture, UI/UX, customized software development, manual and automated testing, cloud deployment

Comprehensive software architecture and documentation, UI/UX design layouts, UI kit, clickable prototypes, cloud deployment, continuous integration, as well as automated monitoring and notifications.

Prices vary based on project scope, complexity, timeline and requirements. Contact us for a personalized estimate.

An approach to the development cycle

-

Team Assembly

We start by assembling a full team of project specialists with the right blend of skills and experience for the work.

-

MVP

We’ll design, build, and launch your MVP, ensuring it meets the core requirements of your software solution.

-

Production

We’ll create a complete software solution that is custom-made to meet your exact specifications.

-

Ongoing

Continuous Support

Our company will be right there with you, keeping your software solution running smoothly, fixing issues, and rolling out updates.

Partnerships & Awards

Recognized on Clutch, GoodFirms and The Manifest for software engineering excellence

Build with OpenAI API Integration

90+ engineers ready to deliver your OpenAI API Integration project on time and within budget

What happens next?

-

Contact us

Contact us today to discuss your project. We’re ready to review your request promptly and guide you on the best next steps for collaboration.

Same day

-

NDA

We’re committed to keeping your information confidential, so we’ll sign a Non-Disclosure Agreement.

1 day

-

Plan the Goals

After we discuss your goals and needs, we’ll craft a comprehensive proposal detailing the project scope, team, timeline and budget.

3-5 days

-

Finalize the Details

Let’s connect on Google Meet to go through the proposal and confirm all the details together!

1-2 days

-

Sign the Contract

As soon as the contract is signed, our dedicated team will jump into action on your project!

Same day

Our offices

Headquarters in Las Vegas, Nevada. Engineering office in Kyiv, Ukraine.