AWS AI Development Services

Pharos Production delivers AWS AI development services for enterprises building machine learning systems on Amazon Web Services. Our team works with SageMaker, Bedrock, Lambda, Step Functions, Rekognition, Comprehend and Textract to build scalable AI solutions. We architect end-to-end ML pipelines on AWS - data ingestion with Kinesis and Glue, feature engineering with SageMaker Processing, model training on managed GPU instances, deployment with SageMaker Endpoints and monitoring with CloudWatch. Amazon Bedrock gives enterprises access to foundation models (Claude, Llama, Titan) with enterprise security and VPC isolation. Pharos Production brings AWS-native AI expertise - serverless inference with Lambda, cost optimization through spot instances and auto-scaling, multi-model endpoints, A/B testing and compliance configurations for HIPAA, SOC 2 and PCI DSS workloads.

- 10+ AWS AI projects

- 12+ AI engineers

- 6+ Bedrock models used

- 25+ AI projects delivered

- 90+ engineers

- 90+ Clutch reviews

Enterprise-grade AI with responsible governance, data privacy and production-ready deployment

What is AWS AI development?

What we build with AWS AI

SageMaker ML pipelines

End-to-end ML workflows with SageMaker Pipelines - data processing, training, evaluation, model registry and automated deployment with approval gates.
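As a rough illustration of an approval gate, the sketch below decides what approval status a newly trained model might be registered with after the evaluation step. The function name, metric names and thresholds are hypothetical. The two status strings match values SageMaker Model Registry accepts for `ModelApprovalStatus`, but the decision rule itself is only an assumption about how a team might configure a gate.

```python
def approval_status(metrics: dict[str, float], minimums: dict[str, float]) -> str:
    """Pick a registry approval status from evaluation metrics.

    A model that misses any minimum is rejected outright. A model that
    passes every check still waits for a human reviewer, which is the
    "approval gate" before automated deployment.
    """
    for name, minimum in minimums.items():
        # A metric that was never reported is treated as a failure.
        if metrics.get(name, float("-inf")) < minimum:
            return "Rejected"
    return "PendingManualApproval"
```

In a real pipeline the returned value would typically be passed to the model registration step, and deployment would run only after a reviewer changes the status to `Approved`.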

Bedrock foundation model apps

Enterprise AI applications using Claude, Llama, Titan via Bedrock with Knowledge Bases (RAG), Agents and Guardrails for safe, grounded responses.
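To make the Bedrock flow more concrete, here is a minimal sketch of how an application might build a request for the Bedrock Runtime Converse API with an optional guardrail attached. The model ID, guardrail identifier, default values and function names are placeholders for illustration, not settings from a Pharos Production project. The client is passed in as an argument so the request shape can be checked without AWS credentials.

```python
from typing import Any, Optional


def build_converse_request(
    model_id: str,
    user_text: str,
    guardrail_id: Optional[str] = None,
    guardrail_version: str = "DRAFT",
    max_tokens: int = 512,
) -> dict[str, Any]:
    """Build keyword arguments for the bedrock-runtime `converse` call."""
    request: dict[str, Any] = {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": user_text}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": 0.2},
    }
    if guardrail_id:
        # Attach the guardrail only when one is configured.
        request["guardrailConfig"] = {
            "guardrailIdentifier": guardrail_id,
            "guardrailVersion": guardrail_version,
        }
    return request


def ask(client: Any, request: dict[str, Any]) -> str:
    """Send the request and return the first text block of the reply."""
    response = client.converse(**request)
    return response["output"]["message"]["content"][0]["text"]
```

With boto3 installed and credentials configured, the client would be created with `boto3.client("bedrock-runtime")` and passed to `ask`. Because the model is selected only by `modelId`, switching between Claude, Llama or Titan in this sketch means changing one string.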

Serverless AI with Lambda

Event-driven AI processing - image analysis triggers, document processing queues, real-time prediction APIs and chatbot backends without server management.
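An image analysis trigger of this kind can be sketched as a Lambda handler that reacts to S3 upload events. The example below assumes the standard S3 event notification shape. The `analyze` callable is a hypothetical stand-in for whatever model or service call does the actual work.

```python
import json
from typing import Any, Callable
from urllib.parse import unquote_plus


def extract_s3_objects(event: dict[str, Any]) -> list[tuple[str, str]]:
    """Return (bucket, key) pairs from an S3 event notification."""
    pairs = []
    for record in event.get("Records", []):
        s3 = record.get("s3")
        if not s3:
            continue  # skip records that did not come from S3
        bucket = s3["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 events (spaces become "+").
        key = unquote_plus(s3["object"]["key"])
        pairs.append((bucket, key))
    return pairs


def make_handler(analyze: Callable[[str, str], dict[str, Any]]):
    """Wrap a hypothetical `analyze(bucket, key)` function as a Lambda handler."""

    def handler(event: dict[str, Any], context: Any) -> dict[str, Any]:
        results = [analyze(b, k) for b, k in extract_s3_objects(event)]
        return {"statusCode": 200, "body": json.dumps(results)}

    return handler
```

Keeping the event parsing separate from the analysis call makes the handler easy to test locally with a sample event before it is deployed.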

Computer vision with Rekognition

Image and video analysis - facial recognition, content moderation, object detection, text extraction and custom label detection for industry-specific use cases.

Document intelligence with Textract

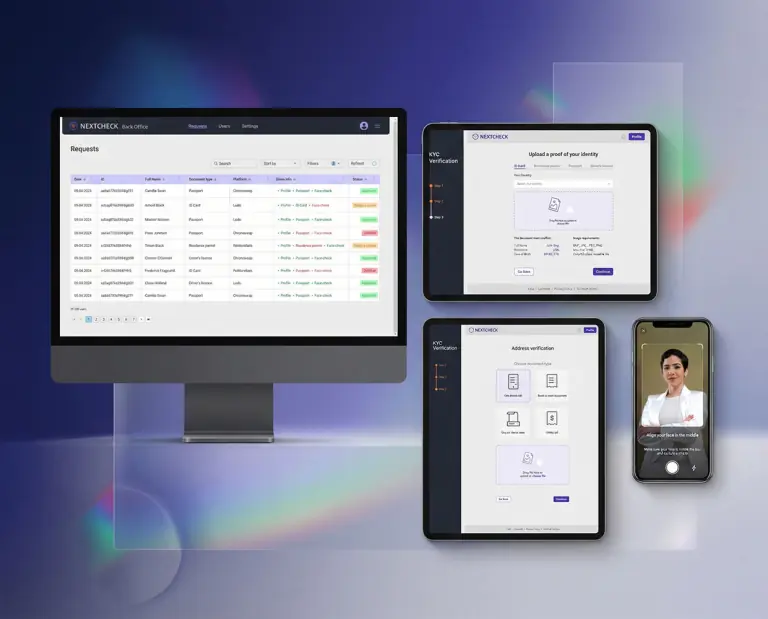

Automated document processing - invoice extraction, form parsing, table extraction and identity document verification with high accuracy OCR.
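As a small example of working with OCR output, the sketch below filters a Textract text-detection response by confidence. It assumes the response shape returned by `DetectDocumentText` (a `Blocks` list whose entries carry `BlockType`, `Text` and a 0-100 `Confidence`). The 90.0 threshold and the function names are illustrative assumptions.

```python
from typing import Any


def confident_lines(response: dict[str, Any], min_confidence: float = 90.0) -> list[str]:
    """Return the text of LINE blocks whose confidence meets the threshold."""
    return [
        block["Text"]
        for block in response.get("Blocks", [])
        if block.get("BlockType") == "LINE"
        and block.get("Confidence", 0.0) >= min_confidence
    ]


def needs_review(response: dict[str, Any], min_confidence: float = 90.0) -> bool:
    """Flag a document for manual review if any line falls below the threshold."""
    return any(
        block.get("BlockType") == "LINE"
        and block.get("Confidence", 0.0) < min_confidence
        for block in response.get("Blocks", [])
    )
```

Routing low-confidence documents to a human reviewer is one common way to keep extraction accuracy high on poor scans. The right threshold depends on the document type.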

Real-time ML inference

Low-latency prediction endpoints with SageMaker real-time inference, multi-model endpoints, auto-scaling and A/B testing for model versions.
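For A/B testing, a SageMaker endpoint splits traffic across production variants in proportion to each variant's weight relative to the sum of all weights. The sketch below works through that arithmetic. The variant names and weights are made-up examples, and the dictionaries mirror only two of the fields in a real endpoint configuration.

```python
def traffic_shares(variants: list[dict]) -> dict[str, float]:
    """Compute each variant's share of traffic from its weight."""
    total = sum(v["InitialVariantWeight"] for v in variants)
    if total <= 0:
        raise ValueError("at least one variant needs a positive weight")
    return {v["VariantName"]: v["InitialVariantWeight"] / total for v in variants}


# Hypothetical setup: the current model keeps most traffic, the candidate gets a slice.
variants = [
    {"VariantName": "model-v1", "InitialVariantWeight": 9.0},
    {"VariantName": "model-v2", "InitialVariantWeight": 1.0},
]
shares = traffic_shares(variants)
```

With these weights the candidate variant receives roughly one request in ten, which limits exposure while its metrics are compared against the current model.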

AWS AI vs Azure AI vs Google Vertex AI

| Factor | AWS AI | Azure AI / Google Vertex AI |
|---|---|---|
| ML platform | SageMaker - most comprehensive managed ML | Azure: Azure ML. Google: Vertex AI |
| Foundation models | Bedrock: Claude, Llama, Titan, Mistral | Azure: Azure OpenAI (GPT). Google: Gemini |
| Serverless AI | Lambda + Step Functions, mature ecosystem | Azure: Functions. Google: Cloud Functions |
| Pre-built AI | Rekognition, Comprehend, Textract, Polly | Azure: Cognitive Services. Google: Vision, NLP |
| Market share | Largest cloud market share (31%) | Azure: 25%. Google: 11% |
| Cost optimization | Spot instances, Savings Plans, Inferentia | Azure: Reserved VMs. Google: preemptible VMs |
| Enterprise adoption | Most enterprises, strongest partner ecosystem | Azure: Microsoft shops. Google: data-heavy orgs |

Pharos Production recommends AWS AI for organizations already on AWS, teams needing the broadest range of managed AI services and projects requiring Bedrock multi-model access. Azure AI suits Microsoft-centric enterprises. Google Vertex AI excels for BigQuery-integrated analytics and Gemini-first architectures.

Limitations: AWS AI services have a steeper learning curve than competitors due to the breadth of options (choosing between 20+ AI services). SageMaker pricing is complex with separate charges for training, hosting, storage and data transfer. Bedrock model availability can lag behind direct API access from model providers. Some AWS AI services (Rekognition, Comprehend) have region availability limitations.

AWS AI Development Benchmark 2026

Proprietary research based on 20+ AWS AI and ML projects delivered by Pharos Production. Dataset covers SageMaker pipelines, Bedrock integrations, Lambda AI functions and managed AI services. Methodology (Pharos Verified Delivery): aggregated delivery metrics with AWS cost and performance data. Full report available on request.

AWS AI projects we delivered

- AWS AI pricing is complex and unpredictable - SageMaker charges for notebooks, training, endpoints, storage and data transfer separately, and a misconfigured endpoint left running overnight can generate thousands of dollars in unexpected costs.

- Vendor lock-in is severe - SageMaker Pipelines, Feature Store and Model Monitor use proprietary APIs with no portable equivalent, making migration to Azure or GCP a multi-month engineering effort.

- Bedrock model availability lags behind direct API providers - new Claude and Llama versions appear on Bedrock weeks or months after their original release, and some model configurations (extended context, fine-tuning) may never be supported.

- AWS region availability for AI services is uneven - SageMaker and Bedrock features launch in us-east-1 first and may take 6-12 months to reach EU or APAC regions, creating compliance issues for data residency requirements.

- AWS holds 31% cloud market share with the broadest range of managed AI services - from SageMaker to Bedrock to 15+ pre-built AI APIs.

- Amazon Bedrock provides enterprise access to Claude, Llama, Titan and Mistral with VPC isolation and no data sharing with model providers.

- SageMaker reduces ML infrastructure management by 80%, letting teams focus on model development rather than cluster provisioning.

- Pharos Production has delivered 20+ AWS ML projects including SageMaker pipelines, Bedrock apps and serverless AI systems.

- An AWS AI project starts from $35,000-$70,000 and takes 8-14 weeks depending on pipeline complexity and service integration.

Reviews

Independent reviews from Clutch, GoodFirms and Google - verified client feedback on our software projects

Based on 10 verified client reviews

Frequently asked questions

-

SageMaker saves 3-6 months of infrastructure engineering for training, serving and monitoring. Build custom only if you need extreme optimization (sub-5ms latency), unusual hardware configurations or want to avoid vendor lock-in.

For 90% of enterprise ML workloads, SageMaker is the right choice.

-

Bedrock adds enterprise features - VPC isolation, IAM access control, model invocation logging, Guardrails (content filtering) and Knowledge Bases (managed RAG). Direct API calls are simpler but lack governance.

Choose Bedrock when security, compliance and multi-model access matter.

-

We use spot instances for training (70% savings), Inferentia chips for inference (40% savings vs GPU), auto-scaling endpoints with scheduled scaling, SageMaker Savings Plans and right-sizing instance types based on actual workload patterns.
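To show how the percentages above translate into a bill, here is a back-of-the-envelope sketch. The hourly rates and hour counts are invented for illustration and are not AWS list prices. Only the 70% and 40% savings figures come from the answer above.

```python
def discounted_cost(hourly_rate: float, hours: float, savings: float) -> float:
    """Cost after applying a fractional saving (0.70 means 70% cheaper)."""
    if not 0.0 <= savings < 1.0:
        raise ValueError("savings must be in the range [0, 1)")
    return round(hourly_rate * hours * (1.0 - savings), 2)


# Hypothetical training job: 100 GPU hours at an assumed $4.00/hour on-demand rate.
training_on_demand = discounted_cost(4.00, 100, 0.0)
training_spot = discounted_cost(4.00, 100, 0.70)

# Hypothetical endpoint: 720 hours/month at an assumed $1.50/hour GPU rate.
inference_gpu = discounted_cost(1.50, 720, 0.0)
inference_inferentia = discounted_cost(1.50, 720, 0.40)
```

Under these assumed rates, the training job drops from $400 to $120 and the monthly endpoint from $1,080 to $648. Actual savings vary with instance type, region and how often spot capacity is interrupted.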

-

Yes. SageMaker, Bedrock, Comprehend Medical, Textract and other services are HIPAA eligible.

We configure VPC isolation, encryption at rest and in transit, CloudTrail audit logging and IAM fine-grained access controls for regulated workloads.

-

Bedrock integration MVPs start from $35,000-$60,000. SageMaker pipeline projects range from $50,000 to $150,000.

Enterprise ML platforms with multi-service integration cost $100,000 to $300,000+. AWS infrastructure costs are additional.

Choose your cooperation model

Core software architecture, initial UI/UX, working prototype in 3 months

Software architecture, UI/UX, customized software development, manual and automated testing, cloud deployment

Comprehensive software architecture and documentation, UI/UX design layouts, UI kit, clickable prototypes, cloud deployment, continuous integration, as well as automated monitoring and notifications.

Prices vary based on project scope, complexity, timeline and requirements. Contact us for a personalized estimate.

An approach to the development cycle

-

Team Assembly

We assemble a complete project team of specialists with the right blend of skills and experience to start the work.

-

MVP

We’ll design, build, and launch your MVP, ensuring it meets the core requirements of your software solution.

-

Production

We’ll create a complete software solution that is custom-made to meet your exact specifications.

-

Ongoing

Continuous Support

Our company will be right there with you, keeping your software solution running smoothly, fixing issues, and rolling out updates.

Partnerships & Awards

Recognized on Clutch, GoodFirms and The Manifest for software engineering excellence

Build with AWS AI

90+ engineers ready to deliver your AWS AI project on time and within budget

What happens next?

-

Contact us

Contact us today to discuss your project. We’re ready to review your request promptly and guide you on the best next steps for collaboration.

Same day

-

NDA

We’re committed to keeping your information confidential, so we’ll sign a Non-Disclosure Agreement.

1 day

-

Plan the Goals

After we chat about your goals and needs, we’ll craft a comprehensive proposal detailing the project scope, team, timeline and budget.

3-5 days

-

Finalize the Details

Let’s connect on Google Meet to go through the proposal and confirm all the details together!

1-2 days

-

Sign the Contract

As soon as the contract is signed, our dedicated team will jump into action on your project!

Same day

Our offices

Headquarters in Las Vegas, Nevada. Engineering office in Kyiv, Ukraine.